I fell down an 8-bit rabbit hole 45 days ago, looking back at how the computer industry evolved from the Apple ][ in 1977 into the 1980s, up to the first pen computers of 1991. Last week I found the needle hiding in this haystack (mixing metaphors) on why evolution took that path, and now it’s time to put together all the learnings into an alternative history of what might have been, given just a few changes to history.

Change #1: A culture of iteration. My biggest epiphany from this research is how much tech culture has changed. In the intervening 45 years we learned that the best path to success is small, incremental change coupled with a lot of experimentation.

Change #2: No Motorola 68000. Without that chip launching in 1979, there wasn’t an obvious next CPU for Apple and others to choose. I’m thus basing this alternative history on the idea that Apple bought a 6502 license from MOS or WDC, partnered with a fab, and extended that chip to their needs.

Apple ///

With those rules in place, we branch off from history in 1978 when Apple was still working on the ][+ and ///. Given the culture of iteration, Apple no longer expected the // to lose all consumer interest in a year or two. Instead, the strategy was long-term, focusing the // on gamers, hobbyists, and home use, while the /// was targeted at businesses. That removed the need for the /// to be backward compatible, and as such, the /// traded color graphics for higher resolution, with 40×24, 80×48, and a new 100×60 text mode, and with 600×400 black and white high-res graphics (or on the monitors of the day, green and black).

To keep this line focused on businesses, out went the joystick port, and with the launch of the floppy disk, out also went the support for cassette tape storage.

Back again to the idea of iteration, this initial /// had far fewer changes from the ][, which allowed it to be launched in 1978 rather than 1980, continuing the annual launch cycle that Apple followed in 1976 and 1977. In the real timeline, Apple would not do annual launches again until the 21st Century and the iPhone.

Apple /V

Keeping the design simple, that alternative /// was a modest success. Successful enough that Apple’s next release in 1979 was the model /V rather than the ][+. For my own simplicity, let’s leave its changes as they are in the history books, except that the /V’s CPU was sped up by 50% to 1.5MHz.

Apple V

Four releases in four consecutive years, each selling above expectations, the Apple V was back for businesses in 1980. With two years to work on upgrades, Apple had time for more significant changes. This release introduced ProDOS and double-sided, 284k floppy disks.

More importantly, the V included the new, 24-bit 6502 running at 2MHz, with 96K of RAM minimum, upgradable to 1MB. Aesthetically, the only change was two bays for disk drives, just like the IBM PC that was still a year away from launch.
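To put numbers on what wider addresses buy, here is a small sketch of the arithmetic. The function names are mine, purely for illustration, and it assumes a flat address space where every address maps to one byte. A 16-bit address reaches 64K, while 24 bits reach 16MB, comfortably more than the 1MB ceiling above.

```python
# Illustrative arithmetic only: how much memory a flat address space can reach.
# The function names are hypothetical, not taken from any real toolchain.

def address_space_bytes(address_bits: int) -> int:
    """Number of distinct byte addresses for a given address width."""
    return 1 << address_bits


def human(n: int) -> str:
    """Format a power-of-two byte count using binary units."""
    units = ["B", "KiB", "MiB", "GiB"]
    idx = 0
    while n >= 1024 and idx < len(units) - 1:
        n //= 1024
        idx += 1
    return f"{n} {units[idx]}"


if __name__ == "__main__":
    # 16 bits: the stock 6502; 24 bits: the imagined chip in this post;
    # 32 bits: the mid-1980s step described later in the story.
    for bits in (16, 24, 32):
        print(bits, "bits ->", human(address_space_bytes(bits)))
```
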

With VisiCalc for spreadsheets, AppleWorks for word processing, and the ability to grow beyond 64K, that PC from IBM was a far smaller success. Certainly no one was fired for buying IBM, but Intel had to scramble for an 80186 to match the power of the 652402.

Apple V/ (Pipp!n)

Back to the home market, Steve and Steve, two former employees of Atari, were jealous of Atari’s 2600. Rather than the V/ being an upgraded /V, the 1981 release from Apple was a consumer device without a keyboard, with no upgrade slots, but with two game controllers, a slot for a software cartridge, plus a socket for an external disk drive and the keyboard from the V.

Internally, this was an Apple /V repackaged as a video game console, with a sprite engine added to its unique 65G02 (G for graphics) and the same 24-bit addresses as the V. Given the thousands of games already available for the // and /V, this was highly competitive with the Atari 2600. And given that plugging in a keyboard and disk drive turned this into a home computer, it outsold the Atari 2600.

In this alternative history Nintendo’s NES/Famicom was only popular in Japan, and Mario and Zelda launched on the Apple Pipp!n.

Meanwhile, the /V did get an upgrade too. Those 24-bit 6502s for the V were all tested. As with all chips, some had flaws and didn’t work at all, but some worked just fine at 1.5MHz but not at 2MHz. The /V upgrade moved to 1.5MHz 652402s, and the standard amount of RAM was upped to 72K.
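That sorting step is usually called speed binning. A minimal sketch of the idea follows, assuming each tested chip reports the highest clock at which it ran stably. The thresholds come from the story above, while the function name and the sample measurements are invented for illustration.

```python
# Hypothetical speed-binning sketch: sort tested chips by the fastest clock
# at which they ran stably. Thresholds follow the story (2MHz and 1.5MHz);
# the measurements below are made up for illustration.

def bin_chip(max_stable_mhz: float) -> str:
    """Assign a tested chip to a product line, or reject it."""
    if max_stable_mhz >= 2.0:
        return "V"        # full-speed parts go to the business machine
    if max_stable_mhz >= 1.5:
        return "/V"       # slower but working parts go to the home machine
    return "reject"       # too flawed to ship at either speed


if __name__ == "__main__":
    measured = [2.1, 1.7, 0.0, 2.0, 1.5, 1.2]  # invented test results
    for mhz in measured:
        print(f"{mhz:.1f} MHz -> {bin_chip(mhz)}")
```

The appeal is that chips which would otherwise be scrapped still end up in a product, which fits the cost-saving theme of the later releases.
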

Apple V//

Lucky number seven. Back in 1979, Steve Jobs and a few others at Apple took a visit to Xerox PARC. That is the trip where they learned about graphical user interfaces and the mouse.

Rather than spend the next four years building the Lisa from scratch, Apple simply launched MouOS as the big story for the Apple V// in 1982. Thus the age of windows began.

Couple that launch with a 4MHz 652416, Apple’s first native 16-bit CPU, and, for the first time in the industry, options for buying even faster CPUs: 5MHz, 6MHz, and the screaming 8MHz. (Before scoffing at 8MHz, do keep in mind that a 4MHz 65C02 in the real timeline can out-perform a 10MHz Motorola 68000, the former being the chip in the real-world //c, and the latter being the chip in the original Macintosh. With bitblt built into the alternative-world chip, that UI would have been snappy.)

Apple V/// and V///c and Pipp!n //

In 1983 customers were surprised to see not just one new computer, but three. The V/// still kept the original design of the ][, but it too now sported a choice of speeds: 2MHz, 3MHz, and 6MHz. The Pipp!n // was expected, with better graphics and a faster CPU, but the same basic machine that was still flying off the shelves (E.T. the Extra-Terrestrial didn’t arrive until 1984).

The “just one more thing” that year was a compact, portable version of the V///. Apple took this upgrade cycle to do more hardware integration, lower the chip count, lower their costs, and split those savings with customers so that their computers were not just competitive with IBM and its clones, but more profitable at those prices.

What customers didn’t see is that the V/// and Pipp!n // also had half the chips, as would all future models. Apple’s advantage over the rest of the computer market was the ability to design its own chips. In the real timeline that lesson wasn’t learned until the iPhone, and starting in 2020 in the Macs too.

(Lowering costs through integration was the motivation for the original timeline //c.)

No Macintosh

With MouOS a big hit, there never was a need for a team flying a pirate flag off the main campus, and so no Macintosh. With Apple leading the computer market, there wasn’t the need to hire anyone from Pepsi to run the company. It is unlikely that Jobs had matured enough to run it himself by 1984, but with the annual upgrade cycle, with computer lines for home, business, and gaming, Steve was kept busy ensuring the designs were glorious and the software experience insanely great.

By the mid-1980s, the Apple chips moved to 32-bit addresses and 32-bit registers. Apple being Apple, these chips integrated networking drivers, graphics processors, and more, making them SoCs years before that was an industry norm.

Apple LXV

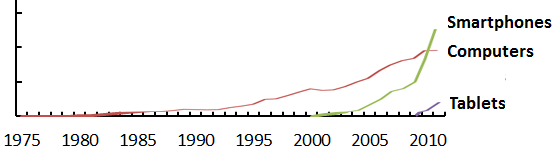

This story ends 45 years later, in 2022, with the Apple LXV (ignoring the fact that no marketing naming scheme has ever lasted that long). I don’t expect that my Apple notebook, tablet, and phone of 2022 in this alternative history would be significantly different from what I’m surrounded by while writing this post.

What I wonder is what that CPU would be like. Apple was a key partner in making ARM a commercial success, so ARM probably wouldn’t have existed. Apple being Apple, they wouldn’t have shared their 6502 upgrades with others.

Intel would have had fewer sales with far stronger competition. Given the history of defections in hardware companies (e.g. Fairchild, Intel, Zilog, and MOS), probably someone from Apple would have read the paper about RISC from Berkeley in the early 1980s and launched a competitor by the late 1980s, which Apple would likely have acquired in the 1990s to add to its lead in chip design.

Conclusion

I doubt this alternative timeline would have left much meaningful change to the technology we use today. Intel and Microsoft would likely be smaller, but over four decades Apple could have easily lost their lead to Wintel for many reasons. Or maybe Microsoft would have been an even bigger success, using that iteration culture to lead all software development, from BASIC to NOTSOBASIC to COMPLEX## while also still focusing on Office, all without the complexity and burden of having to develop Windows.

I’ll leave Microsoft’s alternative history for the future.